PS5: Neural Networks

This problem set implements an image classifier with a neural network. You will implement a network with a single hidden layer to solidify your understanding of the algorithms. PS6 will use off-the-shelf toolkits to facilitate more complex, deeper architectures that are difficult to code from scratch.

As in all problem sets, you may work in pairs (and are, in fact, encouraged to do so). Use the "search for teammates" post on Piazza to find a partner.

There is no analysis or data loading this time, and not much code to write, but you may find that the algorithm is more involved than the earlier ones. Aim to start early! You can work on Part A after Monday's class, and Part B after Thursday's.

Objective

- Implement a neural network architecture with a single hidden layer

- Understand the backpropagation algorithm and gradient descent updates

- Understand how neural network hyperparameters affect performance

Setup

Click the repository link at the top of the page while logged on to GitHub. This should create a new private repository for you with the skeleton code.

If you are working in a pair, go to Settings > Collaborators and Teams in your repository and add your partner as a collaborator. You will work on and submit the code in this repository together. Each pair should only have one repository for this problem set; delete any others lying around.

Clone this repository to your computer to start working. Commit your changes early and often! There's no separate submission step: just fill out honorcode.py and README.md, and commit. The last commit before the deadline will be graded, unless you increment the LateDays variable in honorcode.py.

See the workflow and commands for managing your Git repository and making submissions.

Part A: Forward Pass [15 pts]

Code for this section is to be written in singlenn.py, and can be completed after Monday's class. Your code will compute the activations after each layer of a neural network, the loss at the final layer, the predictions for a dataset, and the accuracy.

Follow the instructions in the file to implement the forward, predict, accuracy, and loss methods.

Since we won't cover regularization loss until Thursday, your loss method can implement the prediction loss only at first.
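To make the four quantities concrete, here is a minimal NumPy sketch of a forward pass. It is not the interface of singlenn.py: the standalone function signatures, the ReLU hidden activation, and the softmax cross-entropy prediction loss are all assumptions made for illustration, so follow the instructions in the skeleton file wherever they differ.

```python
import numpy as np

def forward(X, W1, b1, W2, b2):
    """Hidden activations and class scores for a batch X of shape (N, D)."""
    hidden = np.maximum(0, X @ W1 + b1)   # ReLU hidden layer (assumed)
    scores = hidden @ W2 + b2             # one raw score per class
    return hidden, scores

def softmax(scores):
    """Row-wise softmax; subtracting the row max avoids overflow in exp."""
    shifted = scores - scores.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)

def loss(scores, y):
    """Mean cross-entropy prediction loss (assumed; no regularization term)."""
    probs = softmax(scores)
    return -np.log(probs[np.arange(len(y)), y]).mean()

def predict(scores):
    """Predicted class = index of the highest score in each row."""
    return scores.argmax(axis=1)

def accuracy(scores, y):
    """Fraction of examples whose predicted class matches the label."""
    return (predict(scores) == y).mean()

# Tiny made-up batch: 4 examples, 3 features, 5 hidden units, 3 classes.
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 3))
y = np.array([0, 2, 1, 2])
W1, b1 = np.zeros((3, 5)), np.zeros(5)
W2, b2 = np.zeros((5, 3)), np.zeros(3)

hidden, scores = forward(X, W1, b1, W2, b2)
print(loss(scores, y))      # all-zero weights give uniform predictions, so this is log(3)
print(accuracy(scores, y))  # every prediction is class 0, so 1 of 4 labels matches
```

The all-zero weights are a useful sanity check under these assumptions: an untrained softmax classifier over C classes should report a loss close to log(C).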

An automated tester for Part A is in your repository. Execute it by running

python tester.py parta_noreg.pickle

for a model without regularization loss. Once you have implemented regularization loss, run

python tester.py parta_reg.pickle

Part B: Backpropagation and Training [20 pts]

Follow the instructions in singlenn.py to implement the backward and train methods.

An automated tester for toy data will be made available on Tuesday; check your repositories.
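As a rough illustration of what backpropagation and gradient descent compute, the sketch below derives the gradients for a single-hidden-layer network and runs a few full-batch updates on made-up toy data. The function name `loss_and_grads`, the ReLU activation, the softmax cross-entropy loss, the learning rate, and the omission of regularization are all assumptions for this example, not the design of singlenn.py.

```python
import numpy as np

def loss_and_grads(X, y, W1, b1, W2, b2):
    """Cross-entropy loss and its gradients for a one-hidden-layer ReLU network."""
    N = X.shape[0]

    # Forward pass, keeping the intermediate values needed for backprop.
    pre = X @ W1 + b1
    hidden = np.maximum(0, pre)
    scores = hidden @ W2 + b2
    shifted = scores - scores.max(axis=1, keepdims=True)
    probs = np.exp(shifted)
    probs /= probs.sum(axis=1, keepdims=True)
    loss = -np.log(probs[np.arange(N), y]).mean()

    # Backward pass: apply the chain rule layer by layer, output to input.
    dscores = probs.copy()
    dscores[np.arange(N), y] -= 1      # d(loss)/d(scores) for softmax + cross-entropy
    dscores /= N
    dW2 = hidden.T @ dscores
    db2 = dscores.sum(axis=0)
    dhidden = dscores @ W2.T
    dhidden[pre <= 0] = 0              # ReLU passes gradient only where it was active
    dW1 = X.T @ dhidden
    db1 = dhidden.sum(axis=0)
    return loss, {"W1": dW1, "b1": db1, "W2": dW2, "b2": db2}

# Made-up toy problem: the label depends only on the sign of the first feature.
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 4))
y = (X[:, 0] > 0).astype(int)
params = {
    "W1": 0.1 * rng.normal(size=(4, 8)), "b1": np.zeros(8),
    "W2": 0.1 * rng.normal(size=(8, 2)), "b2": np.zeros(2),
}

initial_loss, _ = loss_and_grads(X, y, **params)
for step in range(200):
    current_loss, grads = loss_and_grads(X, y, **params)
    for name in params:
        params[name] -= 0.1 * grads[name]   # gradient descent step (learning rate assumed)
final_loss, _ = loss_and_grads(X, y, **params)
print(initial_loss, final_loss)
```

A common way to debug a backward method is to compare each analytic gradient against a finite-difference estimate of the loss before trusting any training run.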

Part C: Tuning the Network Hyperparameters [5 pts]

Once you have the above two sections working on the provided toy data, you are ready to run your network on the CIFAR image dataset. There is not much code for this section, other than optionally automating hyperparameter tuning with grid search.

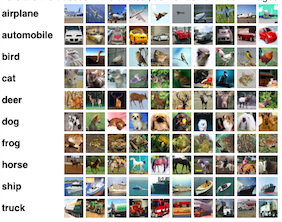

CIFAR-10 is a standard benchmarking dataset in computer vision, consisting of 32x32 color images from 10 different classes ("airplane", "automobile", "bird", etc). This is similar to the MNIST digit recognition task, but with color images that exhibit more variation and are hence harder to classify than digits.

Download the data linked from the site, uncompress it, and save the resulting cifar-10-batches-py folder in your repository clone. Do not commit the folder.

Execute cifar.py, which will load the CIFAR-10 data, split it into training, dev, and test sets, train a neural network with some hyperparameters on the training set, and evaluate its accuracy on the development set.

Explore other hyperparameter options to create a best_net network with better accuracy on the development set. You should be able to get above 45%, and possibly even more.
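If you choose to automate the search, a grid search can be sketched as below. Everything here is hypothetical: the hyperparameter names (`hidden_size`, `learning_rate`, `reg`), the candidate values, and `placeholder_train_and_eval`, which merely stands in for "train a network on the training set and return its development-set accuracy". It returns an arbitrary score, not real results.

```python
import math
from itertools import product

def grid_search(train_and_eval, grid):
    """Try every combination of values in `grid`; return (best_score, best_params)."""
    names = list(grid)
    best_score, best_params = float("-inf"), None
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = train_and_eval(**params)
        if score > best_score:
            best_score, best_params = score, params
    return best_score, best_params

def placeholder_train_and_eval(hidden_size, learning_rate, reg):
    """Stand-in scoring function (NOT a real accuracy).

    Replace this with code that trains your network and returns its
    accuracy on the development set.
    """
    return -(abs(hidden_size - 100) / 100
             + abs(math.log10(learning_rate) + 3)
             + abs(reg - 0.1))

# Hypothetical grid; sensible ranges depend on your implementation.
grid = {
    "hidden_size": [50, 100, 200],
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "reg": [0.0, 0.1, 0.5],
}

best_score, best_params = grid_search(placeholder_train_and_eval, grid)
print(best_params)
```

Select hyperparameters by development-set accuracy only, so the test set stays untouched until you have settled on a final network.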

Complete honorcode.py and README.md and push your files by Thu, Apr 13th at 11:00 pm EST.